Stephen Hawking spent decades warning that humanity faces a simple choice: spread beyond Earth or risk total extinction. The theoretical physicist argued that threats ranging from artificial intelligence to asteroid impacts made the planet too fragile a home for a single-planet species. His repeated, escalating warnings about the timeline for that escape have only grown more relevant as AI development accelerates and space colonization remains largely aspirational.

Hawking’s Blunt Warning on Artificial Intelligence

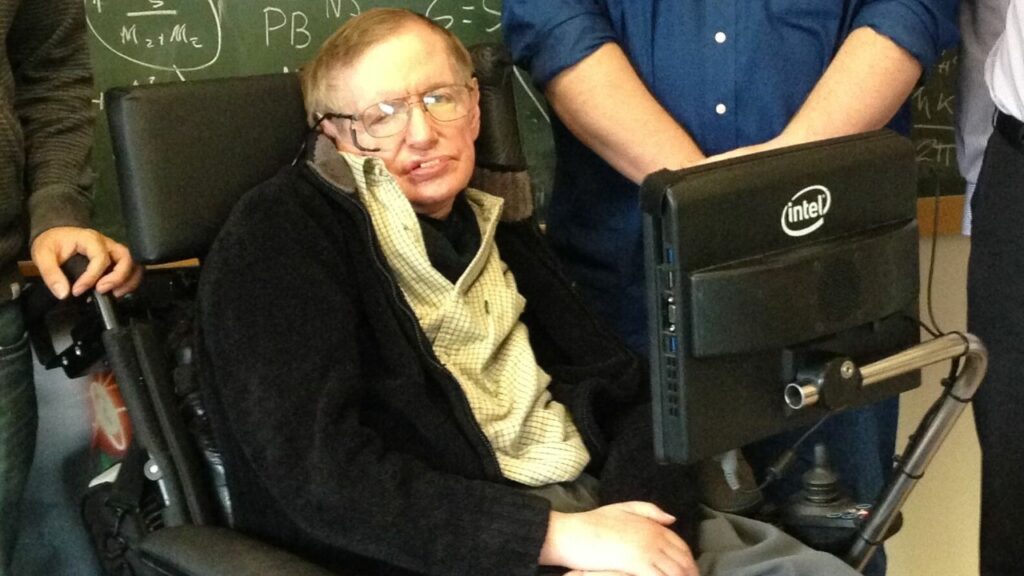

Among the existential dangers Hawking identified, artificial intelligence sat at the top of the list. In a late 2014 interview, the physicist stated plainly that the development of full AI could end humanity. The comment was not a throwaway line. Hawking framed AI as a technology that, once it reached a certain threshold of self-improvement, could evolve faster than biological humans could adapt or control. BBC Technology correspondent Rory Cellan-Jones later described his remarks as a serious warning from one of the world’s most respected scientists, and they drew sharp reactions from both the tech industry and the academic community.

What set Hawking apart from casual doomsayers was his reasoning. He was not worried about robots turning evil in some cinematic sense. His concern centered on the gap between human cognitive speed and the potential pace of machine intelligence. A superintelligent system optimizing for goals that do not align with human survival could, in his view, outmaneuver every safeguard humans put in place. That framing has since become central to the AI safety debate, adopted by researchers and policy advocates who argue that alignment between machine objectives and human values is the defining technical challenge of the century.

Why Hawking Said Earth Is Not Enough

AI was only one thread in a broader argument. Hawking consistently maintained that Earth itself is a single point of failure for the species. In a 2016 statement, he declared that humanity has no future without going to space. That sentence captures what might be called his one rule for avoiding extinction: become a multi-planetary civilization before any single catastrophe, whether engineered or natural, wipes out the only planet humans inhabit. For Hawking, the fragility of Earth’s biosphere, its exposure to cosmic hazards, and the unpredictability of human politics all pointed to the same conclusion.

According to a BBC retrospective on Hawking’s predictions, he was particularly focused on low-probability but catastrophic events as drivers of that urgency. A large asteroid striking the planet is the classic example: unlikely in any given decade, but statistically inevitable over a long enough timeline. Climate change, nuclear conflict, and pandemics rounded out his catalog of threats. None of these risks, Hawking argued, could be fully eliminated. The only durable insurance policy was geographic redundancy, placing human populations on more than one world so that no single disaster could end the species entirely.
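The "unlikely in any given decade, but statistically inevitable" reasoning can be made concrete with a little arithmetic. The sketch below is illustrative only: it assumes each decade carries the same small, independent chance of a catastrophe, and the per-decade figure `P_PER_DECADE` is a hypothetical placeholder, not an estimate from Hawking, the BBC, or any scientific source. The function name `cumulative_risk` is likewise invented for this example.

```python
def cumulative_risk(p_per_period: float, periods: int) -> float:
    """Chance of at least one event across `periods` independent periods.

    Assumes the same probability in every period and independence between
    periods, which is a simplification made for illustration.
    """
    if not 0.0 <= p_per_period <= 1.0:
        raise ValueError("p_per_period must be between 0 and 1")
    if periods < 0:
        raise ValueError("periods must be non-negative")
    # P(at least one) = 1 - P(none) = 1 - (1 - p)^n
    return 1.0 - (1.0 - p_per_period) ** periods


# Hypothetical per-decade probability, chosen only to show the shape of the curve.
P_PER_DECADE = 0.001

for decades in (1, 100, 1_000, 10_000):
    risk = cumulative_risk(P_PER_DECADE, decades)
    print(f"{decades * 10:>7,} years: {risk:.4f}")
```

Under these assumptions the risk in any single decade stays tiny, while the cumulative figure climbs toward 1 as the horizon lengthens. That is the pattern behind the argument, whatever the true per-decade number turns out to be.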

A Shrinking Deadline for Escape

Hawking did not keep his timeline static. In earlier comments highlighted by the BBC, he suggested that humans needed to leave Earth within the next 1,000 to 10,000 years to ensure long-term survival. That window, while urgent by geological standards, still allowed for gradual progress in rocketry, habitat design, and planetary science. It implied that humanity had centuries to build the industrial capacity, life-support systems, and political will necessary for permanent off-world settlements.

But Hawking later revised the estimate sharply downward. By early 2017, according to reporting in the Washington Post, he warned that the human species would need to populate a new planet within 100 years if it were to survive. That dramatic compression, from millennia to a single century, reflected his growing alarm about the speed at which AI capabilities and other technological risks were advancing, along with mounting environmental pressures. The contrast between these two timelines is itself telling: it suggests Hawking saw the threat picture worsening faster than mitigation efforts were progressing. Whether the true deadline is 100 years or 10,000, the core logic remains the same. A species confined to one planet is playing a losing long game against accumulating risks.

The Gap Between Warning and Action

Hawking’s warnings carry a built-in tension that most coverage of his statements glosses over. He identified AI as one of the primary extinction-level threats, yet reaching other planets almost certainly requires advanced AI systems for navigation, life support, and resource extraction. The very technology he feared most is likely essential to the escape plan he prescribed. That paradox has no clean resolution. It means the species cannot simply avoid AI development; it must instead develop AI carefully enough that the technology serves the goal of expansion rather than becoming the catastrophe that makes expansion necessary.

Current space programs illustrate the scale of the gap. No government or private company has yet placed a permanent human settlement beyond Earth. Mars missions remain in planning and testing phases, with timelines that stretch years into the future even under optimistic projections. Meanwhile, AI research has moved at a pace that would likely have alarmed Hawking further, with increasingly capable systems deployed in industry and consumer products long before robust safety regimes are in place. The asymmetry between rapid AI progress and comparatively slow space infrastructure development underscores his concern that the most dangerous technologies are arriving faster than the safeguards or escape routes needed to manage them.

Living With Hawking’s Dilemma

Hawking’s core message leaves humanity with a dilemma rather than a clear roadmap. On one side is the imperative to reduce existential risks on Earth: limiting nuclear arsenals, slowing climate change, and building stronger public health systems. On the other side is the equally urgent push to develop the rockets, habitats, and closed-loop life-support systems that would make off-world colonies viable. Both tasks compete for attention, funding, and political capital, yet his argument implies that neither can safely be neglected. Reducing risk without expanding outward leaves the species exposed to rare but terminal events; expanding without managing risk raises the odds that we will not survive long enough to use the lifeboats we are trying to build.

In practice, living with Hawking’s dilemma may mean treating AI and space technology as linked rather than separate domains. The same advanced algorithms that threaten to outstrip human control could, if properly constrained, help design safer spacecraft, optimize resource use in hostile environments, and monitor Earth for emerging dangers. Hawking’s warnings do not demand abandoning technological progress; they demand a more deliberate version of it, in which the question “Does this make extinction more or less likely?” is asked as routinely as cost or performance. His shrinking deadlines were not precise forecasts so much as a way of forcing that question into the center of public debate, and of reminding a still single-planet species that time may be shorter than it thinks.


*This article was researched with the help of AI, with human editors creating the final content.*